
This post fell out of a conversation I had about mobile applications, in particular Snapchat. The idea of generational user experience effects came from my own experience of consumer electronics. That experience spans wired and analogue devices through to the present day, which gives me a wide perspective on how things have changed.

My parents grew up in an environment where the four most complex devices they would have been exposed to as children were a watch or clock, the household radio, a sewing machine owned by the local seamstress and the piano or organ in the parish church.

Form follows function

I was just old enough to remember electricity coming to the family farm where my Mum grew up. The 1960s vintage Bush TR82C radio still ran off a battery until the mid-1980s. This provided the agricultural mart price changes and weather forecast, as well as the musical entertainment on a Saturday night. Non-rechargeable batteries were relatively expensive and battery-operated devices were used sparingly.

My Dad saw electronics enter industry, where previously electro-mechanical systems and pneumatic circuits had driven simple processes that would now be governed by a microprocessor.

They were fine with new appliances and even the new 1970s Trinitron TV with touch controls; hi-fis and kitchen appliances usually had neatly labelled buttons that may have had logic controls rather than the physical ‘clunk’ of a mechanically operated mechanism behind them.

This is the kind of generational user experience that Dieter Rams developed. The nature of the design, if done well, made the operation seem self-evident.

Modal interface design

The problems started to come with digital watches and VCRs (video cassette recorders). The user experience of these devices was different from anything that had gone before. VCRs and digital watches were, like the computers of their day, modal in nature.

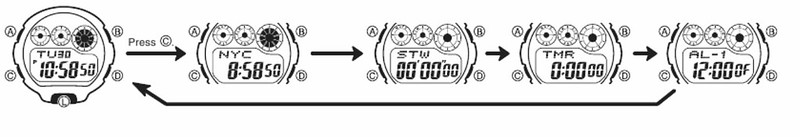

You had to understand what mode a device was in before you could know what pushing a given button would do. In my case this wasn’t an intuitive experience, but I got there by reading the manual. If you own a G-Shock or similar Casio watch, you still live with this modal experience, which is why a G-Shock comes with a user manual the size of two packs of gum.
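To make the modal idea concrete, here is a minimal sketch in Python of a two-button watch. The mode names, button names and behaviours are all invented for illustration and are not taken from any real Casio manual. The point is only that the same button does a different job depending on the mode the device happens to be in.

```python
from dataclasses import dataclass

# Hypothetical modes for an imaginary two-button digital watch.
MODES = ["time", "alarm", "stopwatch"]


@dataclass
class ModalWatch:
    mode_index: int = 0
    alarm_on: bool = False
    stopwatch_running: bool = False

    @property
    def mode(self) -> str:
        return MODES[self.mode_index]

    def press(self, button: str) -> str:
        """Return a description of what the button press did."""
        if button == "MODE":
            # MODE always cycles to the next mode, wrapping around.
            self.mode_index = (self.mode_index + 1) % len(MODES)
            return f"now in {self.mode} mode"
        if button == "ADJUST":
            # ADJUST means something different in every mode.
            if self.mode == "time":
                return "toggle 12/24 hour display"
            if self.mode == "alarm":
                self.alarm_on = not self.alarm_on
                return f"alarm {'on' if self.alarm_on else 'off'}"
            self.stopwatch_running = not self.stopwatch_running
            state = "started" if self.stopwatch_running else "stopped"
            return f"stopwatch {state}"
        raise ValueError(f"unknown button: {button}")


if __name__ == "__main__":
    watch = ModalWatch()
    for button in ["ADJUST", "MODE", "ADJUST", "MODE", "ADJUST"]:
        print(f"{button:>6} -> {watch.press(button)}")
```

Nothing on the face of the watch tells you which branch you are in, which is roughly why the manual ends up doing the work the design can't.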

My Dad had the head to deal with these technologies but didn’t have the time to go through the manuals. In the late 1980s / early 1990s Gemstar launched a simple way of programming the video with the correct time and channel, using a PIN for each programme that was between six and eight digits long. It was known by different names in different regions; in Europe it was called VideoPlus. It was easy enough for anyone who could use a touchpad phone to grasp. Panasonic launched a rival system based on scanning barcodes that wasn’t successful, though a programming sheet still goes for £10 or so on eBay.

VideoPlus allowed me to skip duties as the household VCR programmer. But I didn’t get away from modal interfaces.

Menu-driven interfaces were all the rage on my friends’ digital synthesisers. None more so than the Yamaha DX-series, which had not only a complex way of creating sounds but also a byzantine menu system for accessing them. Knobs and dials in interfaces were expensive, menus driven by software were virtually free once the software was written – and the microprocessors to drive them continued to drop in cost. This was one of the main reasons why albums from that time often credited someone with being a ‘MIDI programmer’. From a manufacturing point of view, robotic pick-and-place machines that automated the manufacture of consumer electronics (until the rise of hand-assembled Chinese electronics from Foxconn) were an added driver for having ‘dial-less’ circuit boards.

In my day job, I worked with a range of computers and my first email account was on a DEC VAX as part of the All-In-1 productivity suite; think of it as a Google services type application on a private cloud, with a ‘command line’-like interface that operated on the same modal principles as the VCR or digital watch.

All-In-1 had a simple email client, a word processor, a ‘filing cabinet’ (think of it as Google Drive) and the front end of business applications; we used the VAX for stock management and to order supplies.

Given the spartan interface, it seemed appropriate that I learned how to touch type on an application for the VAX – mainly because after you had read the newspaper cover-to-cover there wasn’t much to do on a night shift.

We had a few other computers in the labs for running test equipment, usually running some sort of DOS, a couple of Unix-variant boxes (HP, SGI and a solitary Sun Microsystems machine), an Amiga (because they had handy features for video) and Macs.

WIMP

I naturally gravitated towards the Mac. Once you got the hang of the relationship between the movements of the mouse and the cursor on screen, the interface of Windows, Icon, Mouse, Pointer (WIMP) was remarkably similar to the form-follows-function design of analogue consumer electronics. Interface design aped real-world button designs, folders and filing cabinets, even waste paper baskets. Even the spreadsheet mirrored a blackboard grid used at Harvard University to teach business students.

Once you had got used to the WIMP environment it was remarkably simple. More complex devices required menus but for many applications, once you knew some basic rules you were up and running. Part of this was down to Apple laying down interface standards, so cmd Q meant quit an application, cmd C meant copy, cmd X meant cut and cmd V meant paste in any programme.

This was something that Microsoft took as a design lesson for themselves when making Windows; however, it was interesting that they started to break these rules in applications like Outlook.

Things became more complex with applications like Adobe Photoshop, which became so feature-rich that there was more than one right way to achieve a particular task, so instruction manuals tended to be of limited value.

The leap from WIMP to hyper-media was a small one; the act of clicking on a link was relatively easy. What one didn’t realise at the time was the new world this opened up. We went from interlinked documents to surreal worlds created in Macromedia Flash and similar authoring tools on CD-ROMs and eventually the web. An immersive experience was promised that was never fully delivered, mainly because we expected William Gibson’s Sprawl trilogy to be our manifest destiny.

Icons under glass

In the early 1990s the Newton had pioneered a simple version of the icons-under-glass metaphor that consumers would really take to heart with the iPhone and later Android devices. The Newton was too ambitious for the technology available at the time. The Palm series of devices pointed out the potential of icons-under-glass as a metaphor. The Palm V can be seen as a conceptual model for the modern smartphone.

Its metallic case, slim non-removable lithium-ion battery and bonded construction were all eerily reminiscent of the industrial design of the iPhone models rolled out some eight years later.

The launch of the iPhone marked a sea change in consumer adoption, if not technology. Apple built on the prior generations of touch screen devices and improvements in technology to update the experience. They made one choice that made the iPhone stand out from its competitors. The dominant player, Nokia, made devices that were designed to be used one-handed: phones with a computer inside. Apple flipped it around so that it was selling a computer that happened to do phone things as well. In a shop, the iPhone’s bigger screen and more polished interface made it great for sales demonstrations.

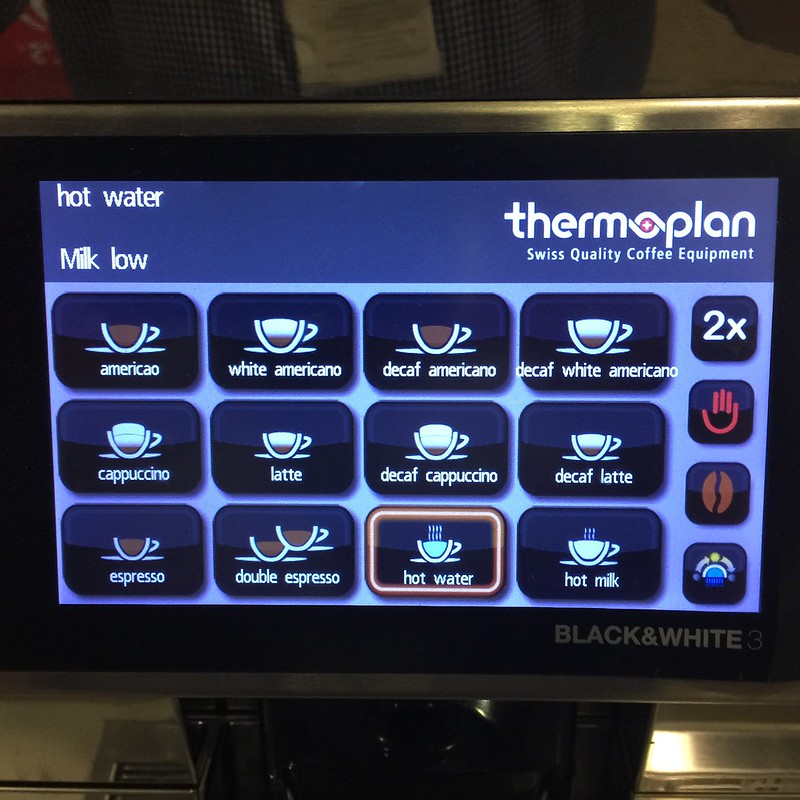

Eventually the technology started to appear everywhere. The coffee machine at work has an iOS-like interface complete with skeuomorphic icons for buttons.

Social interfaces

In some ways, mobile interface design aped existing analogue devices. But things started to change within applications. Designers started to build applications that focused on a particular use case, which made sense given the software feature bloat that had happened on desktop applications and even web experiences like Facebook. Most social app designers haven’t managed to squeeze as much functionality out of their real estate as WeChat/Weixin. You then started to see the phenomenon of app constellations, where non-game, single-purpose apps deep-linked to other applications.

Designers started to take a minimal approach, to cut down on the screen real estate taken up by controls.

Instead controls only appeared in what might be broadly termed a contextual manner. The only difference is that applications which have contextual menus tend to ‘telegraph’ the options and offer a help section in the app.

I am not sure when it started but Snapchat is a prime example of this phenomenon of the ‘social interface’. Their interface features are not explained by a design or manual but are more like cheat codes in a game, shared socially. It feels like a fad, minimalism taken to an extreme, a design language that will move on yet again.

More information

VCR Programming: Making Life Easier Using Bar Codes | LA Times

Quick History of ALL-IN-1 | The Museum of Email & Digital Communications

Jargon watch: app constellation